Alex Constantine - February 1, 2009

Foreword - In-Q-Tel Investments are a Map of the Future of Spying: " ... A relatively unknown branch of the CIA is investing millions of taxpayer dollars in technology startups that, together, paint a map for the future of spying. Some of these technologies can pry into the personal lives of Americans not just for the government but for big businesses as well. ... More than 60 percent of In-Q-Tel’s current investments are in companies that specialize in automatically collecting, sifting through and understanding oceans of information, according to an analysis by the Medill School of Journalism. ... "

http://newsinitiative.org/story/2006/09/01/the_future_of_spying

Non Obvious Relationship Awareness

by Tim O'Reilly

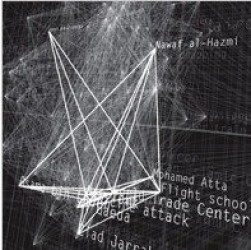

The most interesting person I met this year at PC Forum was Jeff Jonas, founder of System Research and Development (SRD), the data mining company that made its name in Las Vegas with a technology called NORA (Non-Obvious Relationship Awareness) -- software that would alert casino security, for instance, that the dealer at table 11 once shared a phone number with the guy who is winning big at that same table.
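The casino example boils down to a join on shared identifiers between two groups that should have no connection. The sketch below is not NORA's actual algorithm. It is a minimal illustration that assumes hypothetical records with `phones` and `addresses` fields. It indexes one group by identifier and reports every value the other group also holds. It ignores time ("once shared"), fuzzy matching and aliases, all of which a real system would need.

```python
from collections import defaultdict

# Hypothetical records; names, fields and values are illustrative only.
employees = [
    {"name": "Dealer A", "phones": ["555-0101"], "addresses": ["12 Elm St"]},
    {"name": "Dealer B", "phones": ["555-0199"], "addresses": ["9 Oak Ave"]},
]
players = [
    {"name": "Player X", "phones": ["555-0142", "555-0101"], "addresses": ["77 Pine Rd"]},
    {"name": "Player Y", "phones": ["555-0177"], "addresses": ["3 Birch Ln"]},
]

def shared_identifiers(group_a, group_b, fields=("phones", "addresses")):
    """Return (a_name, b_name, field, value) for every identifier the two groups share."""
    # Index group_b by (field, value) so each lookup is a dictionary hit, not a full scan.
    index = defaultdict(list)
    for person in group_b:
        for field in fields:
            for value in person[field]:
                index[(field, value)].append(person["name"])
    hits = []
    for person in group_a:
        for field in fields:
            for value in person[field]:
                for other in index.get((field, value), []):
                    hits.append((person["name"], other, field, value))
    return hits

alerts = shared_identifiers(employees, players)
print(alerts)  # [('Dealer A', 'Player X', 'phones', '555-0101')]
```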

As you can imagine, the government came knocking after 9/11. SRD got funding from In-Q-Tel, the CIA's venture fund, and was acquired by IBM earlier this year. Jeff is now an IBM distinguished engineer and chief scientist of IBM's Entity Analytics division.

His current focus is "anonymous entity resolution" -- the ability to share sensitive data without actually revealing it. That is, by using one-way hashes, you can look across various databases for a match without actually pooling all the data and making it available to all. As you can imagine, solving this problem is fairly critical to the government if they want "total information awareness" while maintaining citizen privacy and some semblance of civil liberties.
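The core trick can be shown in a few lines: each party normalizes and hashes its identifiers, then exchanges only the digests. Matching digests reveal overlaps, and nothing else is disclosed. This is a simplified sketch, not IBM's method. It assumes a keyed hash (HMAC-SHA256) with a secret shared by the parties. A plain unkeyed hash of a phone number could be reversed by hashing every possible number. `SHARED_KEY` and the sample data are hypothetical.

```python
import hashlib
import hmac

# Assumption: the parties agreed on this secret out of band (hypothetical value).
SHARED_KEY = b"hypothetical-shared-secret"

def normalize(identifier: str) -> str:
    # Keep only lowercase letters and digits so "555-0101" and "(555) 0101" agree.
    return "".join(ch for ch in identifier.lower() if ch.isalnum())

def anonymize(identifiers):
    # Map each one-way digest to its original value; only the digests are shared.
    return {
        hmac.new(SHARED_KEY, normalize(i).encode(), hashlib.sha256).hexdigest(): i
        for i in identifiers
    }

agency_a = anonymize(["555-0101", "555-0199"])
agency_b = anonymize(["(555) 0101", "555-0142"])

# Each side publishes only its digests; the intersection reveals the matches and nothing else.
common = set(agency_a) & set(agency_b)
print(sorted(agency_a[d] for d in common))  # ['555-0101']
```

Each party can map a matching digest back to its own record, but it learns nothing about the other side's non-matching entries.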

I also find this idea fascinating with regard to social networking. As I've noted in my talks for the past couple of years, social networking as currently practiced by services like Friendster, Orkut, and LinkedIn is really a "hack." (This is a good thing.) Much as screen scraping was a hack that showed the way to web services, current social networking apps point us towards a future in which we've truly reinvented the address book for the age of the internet. Why should we have to ask people if they will be our friends, and refer dates or jobs to us? Our true social networking applications -- our email, our IM, and our phones -- already know who our friends are. Microsoft Research's Wallop project is a step in the right direction -- a tool that lets us visualize and manage our communications web -- but it only extends to first degree connections. What anonymous entity resolution would allow is an application that extends the Wallop idea to a full six degrees by comparing data across address books without actually sharing the addresses themselves unless the owner was willing.
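Extending this to six degrees amounts to a shortest-path search over a graph whose nodes are hashed contact entries. The sketch below is hypothetical throughout: the addresses are invented, and the hash is unkeyed for brevity, so it is guessable. A real scheme would use a keyed or otherwise hardened hash and would reveal names only with the owner's consent. Breadth-first search returns the first, and therefore shortest, chain of digests up to `max_degrees` hops.

```python
import hashlib
from collections import deque

def digest(email: str) -> str:
    # One-way hash of a normalized address (unkeyed here for brevity; not privacy-hardened).
    return hashlib.sha256(email.strip().lower().encode()).hexdigest()

# Hypothetical address books, published only as hashed entries.
address_books = {
    digest("tim@example.com"): {digest("linda@example.com"), digest("sam@example.com")},
    digest("linda@example.com"): {digest("tim@example.com"), digest("pat@example.com")},
    digest("sam@example.com"): {digest("tim@example.com")},
    digest("pat@example.com"): {digest("linda@example.com"), digest("lee@example.com")},
    digest("lee@example.com"): {digest("pat@example.com")},
}

def shortest_path(start: str, goal: str, max_degrees: int = 6):
    """Breadth-first search over hashed contacts; returns a list of digests or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        if len(path) - 1 >= max_degrees:
            continue  # do not expand beyond the allowed number of degrees
        for contact in address_books.get(path[-1], ()):
            if contact not in seen:
                seen.add(contact)
                queue.append(path + [contact])
    return None

path = shortest_path(digest("tim@example.com"), digest("lee@example.com"))
print(len(path) - 1)  # 3 degrees: tim -> linda -> pat -> lee
```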

Of course, this could be bad for highly connected people. I already know that Linda Stone is my shortest path to almost anybody, but once all my contacts know that as well, Linda might just have to go hide under a rock.

Still, just as Napster unleashed a music revolution by choosing an unorthodox default (if you download, you make your computer available as a server as well), I believe that "opt out" rather than "opt in" is the trigger that will allow social networking to achieve its full potential as one of the core "Web 2.0" applications.

But back to NORA. A lot of what we do at O'Reilly is driven by pattern recognition, watching emerging trends, and deciding on the right point where adding a strong dose of information to the mix (books, conferences, advocacy) will help some important new idea reach a wider audience and hopefully reach its full potential. Mostly we do this pattern recognition by talking to cool people ("alpha geeks"), but we also do some data mining ourselves. But as Jeff points out, most current data mining efforts are rather like a game of Go Fish. (For example, in the intelligence context, "Do you have an Osama? No. Well, then, do you have a Saddam?") Instead, he says, we need "fire and forget" queries that return whenever they have data. (I also believe strongly in visualization tools like the ones we're building in our own research group, tools that let you see aggregate patterns and trends.)
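The difference between Go Fish and "fire and forget" lies in where the work happens. Go Fish re-asks the database over and over. A standing query is stored once and checked against each new record as it arrives. The class below is a hypothetical sketch of that inversion. It is not a description of any SRD or IBM product, and its predicate-and-callback interface is an assumption.

```python
from typing import Callable, Dict, List, Tuple

class StandingQueries:
    """Hypothetical sketch: queries are stored once and evaluated as each new record arrives."""

    def __init__(self) -> None:
        self._queries: List[Tuple[Callable[[Dict], bool], Callable[[Dict], None]]] = []

    def register(self, predicate: Callable[[Dict], bool], on_match: Callable[[Dict], None]) -> None:
        # "Fire and forget": the caller registers interest once and never polls again.
        self._queries.append((predicate, on_match))

    def ingest(self, record: Dict) -> None:
        # Every incoming record is checked against every stored query.
        for predicate, on_match in self._queries:
            if predicate(record):
                on_match(record)

matches = []
engine = StandingQueries()
engine.register(lambda r: r.get("name") == "Subject A", matches.append)

engine.ingest({"name": "Subject B", "city": "Reno"})
engine.ingest({"name": "Subject A", "city": "Las Vegas"})  # triggers the callback
print(matches)  # [{'name': 'Subject A', 'city': 'Las Vegas'}]
```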

At any rate, Jeff's definitely one of the movers and shakers of one of the areas that I believe is going to have a huge impact going forward. He's also an O'Reilly kind of guy -- a high school dropout, a self-taught hacker who developed software that a lot of PhDs told him couldn't be done.

http://radar.oreilly.com/2005/04/non-obvious-relationship-aware.html

•••

DATA DIVER DISSES TERROR-MINING

www.defensetech.org

Jeff Jonas is one of the country's leading practitioners of the dark art of data analysis. Casino chiefs and government spooks alike have used his CIA-funded "Non-Obvious Relationship Awareness" software to scour databases for hidden connections.

So you'd think that Jonas would be all into the idea of using these data-mining systems to predict who the next terrorist attacker might be.

Think again. "Though data mining has many valuable uses, it is not well suited to the terrorist discovery problem," he writes in a new study, co-authored with the Cato Institute's Jim Harper. "This use of data mining would waste taxpayer dollars, needlessly infringe on privacy and civil liberties, and misdirect the valuable time and energy of the men and women in the national security community." Are you listening, NSA?

Jonas doesn't have a problem cobbling together information on suspects from various databases. It's using these databases to forecast a terrorist's behavior -- think market research, but for Al-Qaeda -- that Jonas hates. "The possible benefits of predictive data mining for finding planning or preparation for terrorism are minimal. The financial costs, wasted effort, and threats to privacy and civil liberties are potentially vast," he writes.

One of the fundamental underpinnings of predictive data mining in the commercial sector is the use of training patterns. Corporations that study consumer behavior have millions of patterns that they can draw upon to profile their typical or ideal consumer. Even when data mining is used to seek out instances of identity and credit card fraud, this relies on models constructed using many thousands of known examples of fraud per year.

Terrorism has no similar indicia. With a relatively small number of attempts every year and only one or two major terrorist incidents every few years—each one distinct in terms of planning and execution—there are no meaningful patterns that show what behavior indicates planning or preparation for terrorism. Unlike consumers’ shopping habits and financial fraud, terrorism does not occur with enough frequency to enable the creation of valid predictive models. Predictive data mining for the purpose of turning up terrorist planning using all available demographic and transactional data points will produce no better results than the highly sophisticated commercial data mining done today [with results in the low single-digits – ed.]. The one thing predictable about predictive data mining for terrorism is that it would be consistently wrong.

Without patterns to use, one fallback for terrorism data mining is the idea that any anomaly may provide the basis for investigation of terrorism planning. Given a “typical” American pattern of Internet use, phone calling, doctor visits, purchases, travel, reading, and so on, perhaps all outliers merit some level of investigation. This theory is offensive to traditional American freedom, because in the United States everyone can and should be an “outlier” in some sense. More concretely, though, using data mining in this way could be worse than searching at random; terrorists could defeat it by acting as normally as possible.

Treating “anomalous” behavior as suspicious may appear scientific, but, without patterns to look for, the design of a search algorithm based on anomaly is no more likely to turn up terrorists than twisting the end of a kaleidoscope is likely to draw an image of the Mona Lisa.
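The anomaly approach criticized above can be illustrated with a toy outlier filter. The sketch flags anyone whose count lies more than a chosen number of standard deviations from the mean. The people, the feature (monthly long-distance calls) and the 1.5 threshold are all invented. The example shows both failure modes named in the text: an innocent person with unusual habits is flagged, while someone deliberately "acting as normally as possible" is not.

```python
from statistics import mean, stdev

# Hypothetical monthly counts of long-distance calls; names and numbers are invented.
calls = {
    "resident_1": 40, "resident_2": 42, "resident_3": 38, "resident_4": 41,
    "traveling_nurse": 95,   # unusual but innocent
    "careful_plotter": 40,   # deliberately behaves like everyone else
}

def outliers(values: dict, threshold: float = 1.5):
    """Flag anyone more than `threshold` sample standard deviations from the mean."""
    mu = mean(values.values())
    sigma = stdev(values.values())
    return sorted(k for k, v in values.items() if abs(v - mu) / sigma > threshold)

print(outliers(calls))  # ['traveling_nurse']
```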

Civil libertarians and bloggers have talked 'til they're blue in the face about how lame this kind of terror-predicting is. But I don't think I've ever heard a giant of the field, like Jonas, come out against the practice -- at least not on-the-record. Let's hope this is one conversation that the feds are monitoring.

UPDATE 11:49 AM: Shane Harris here. Die-hard proponents of pattern-based 'data mining' to catch terrorists will remain unconvinced by Jonas' and Harper's argument. While it's true that data mining in the commercial sector is based upon "training patterns," backers of systems such as Total Information Awareness will say, yes, and that's why data mining for terrorists has to start with hundreds -- maybe thousands -- of known or potential terrorist patterns to look for. A major part of TIA research was the creation of terrorist attack templates through red teaming exercises, in which experts were paid to come up with devious and clandestine plots that a terrorist might conceivably attempt. Their various machinations would, presumably, leave a set of digital footprints -- airline tickets purchased, money wired, hotels paid for, and so on -- and THAT data would be mined for clues.
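In its simplest form, the template approach checks whether the events recorded for a person include every event type a red-team template calls for. The sketch below is hypothetical. The template names, event types and footprints are invented and do not come from TIA. Real systems would also need time windows, weights and scoring thresholds, none of which are modeled here.

```python
# Hypothetical red-team templates: each is a set of event types a plot might leave behind.
TEMPLATES = {
    "template_1": {"airline_ticket", "wire_transfer", "hotel_payment"},
    "template_2": {"wire_transfer", "truck_rental"},
}

# Hypothetical digital footprints per person.
footprints = {
    "person_a": ["airline_ticket", "hotel_payment", "restaurant"],
    "person_b": ["wire_transfer", "airline_ticket", "hotel_payment"],
}

def matching_templates(events, templates=TEMPLATES):
    """Return the names of templates whose every event type appears in this person's events."""
    seen = set(events)
    return sorted(name for name, required in templates.items() if required <= seen)

for person, events in footprints.items():
    print(person, matching_templates(events))
# person_a []
# person_b ['template_1']
```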

What's also interesting about this paper is the combination of the authors. Jim Harper is a well-known and articulate activist, and has long since staked out central territory in the security vs. privacy debate. But Jonas has stayed out of politics. Indeed, those who've met him will know that he sticks out like a sore West Coast thumb among Washington gearheads, being unafraid to use the word "dude" in formal conversation and happily acknowledging his ignorance of most Beltway insider baseball. But those who know Jonas and have heard him speak about electronic terrorist hunting know that, like his co-author Harper, he has a strong libertarian streak. Maybe Jonas wouldn't put it quite that way -- dude -- but it's there.

http://www.defensetech.org/archives/cat_data_diving.html